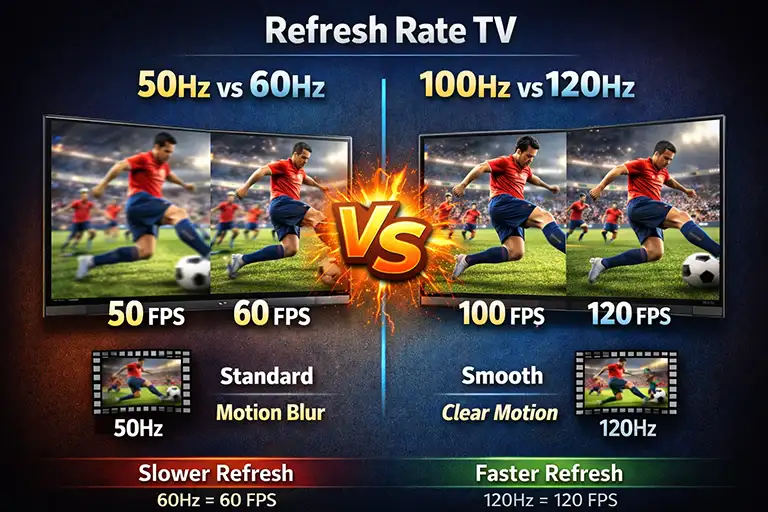

When comparing TVs labeled 50 Hz or 60 Hz, or 100 Hz or 120 Hz, it may seem that these are different models. In practice, each pair describes essentially the same TV. The split into 50/60 Hz and 100/120 Hz is a labeling convention, not a hardware difference. It exists mainly for the convenience of buyers and to follow regional standards, as explained below.

Behind these labels, only one parameter actually matters: the refresh rate, which determines how many frames per second the TV can display.

The refresh rate indicates how often the TV updates the image on the screen. Simply put, it is the highest frame rate the display can actually show. It is a property of the panel itself, so it depends directly on the design and quality of the screen installed in the TV.

For example, a 60 Hz panel refreshes the image 60 times per second, while a 120 Hz panel refreshes it 120 times per second. A higher refresh rate gives smoother motion, which is especially noticeable in fast-moving scenes.
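The link between refresh rate and how long each image stays on screen is simple division: one second is split into as many intervals as there are refreshes. Here is a minimal Python sketch. The function name `frame_time_ms` is our own, chosen for illustration.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """How long each refresh stays on screen, in milliseconds."""
    return 1000.0 / refresh_hz


# Print the per-refresh interval for the four common TV labels.
for hz in (50, 60, 100, 120):
    print(f"{hz:>3} Hz -> {frame_time_ms(hz):.1f} ms per refresh")
```

Doubling the refresh rate halves the time each image is shown. At 60 Hz each image lasts about 16.7 ms, and at 120 Hz about 8.3 ms, which is why motion looks smoother on a 120 Hz panel.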

Until 2017, there was no mainstream way to deliver 4K video to a TV at more than 60 Hz. Consumer video formats of the time, such as broadcast TV and Blu-ray discs, carried at most 60 frames per second. HDMI 2.0, the newest version of the interface then, could carry 4K video at no more than 60 Hz. As a result, even TVs equipped with 100 Hz or 120 Hz panels could not make full use of them when receiving 4K content from external devices.

HDMI 2.1, announced in 2017, made higher frame rates at 4K resolution possible. This lets modern TVs take full advantage of 100 Hz and 120 Hz panels.
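A rough calculation shows why 4K at 120 Hz needed a new HDMI version. This sketch makes several simplifying assumptions:

- It counts only the visible pixels.
- It assumes 3840×2160 resolution and 24 bits per pixel (8-bit RGB).
- It ignores blanking intervals and encoding overhead, so the real requirement is higher.

Because the result is a lower bound, exceeding a link's maximum rate rules that link out. Staying under the maximum does not by itself prove the signal fits. The constants are the published maximum link rates of HDMI 2.0 (18 Gbit/s) and HDMI 2.1 (48 Gbit/s). The function name `active_video_gbps` is hypothetical.

```python
# Published maximum link rates in Gbit/s; usable payload is lower after encoding overhead.
HDMI_2_0_MAX_GBPS = 18.0
HDMI_2_1_MAX_GBPS = 48.0


def active_video_gbps(width: int, height: int, refresh_hz: float,
                      bits_per_pixel: int = 24) -> float:
    """Lower-bound bit rate: visible pixels only, no blanking or link encoding overhead."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


# Compare 4K at 60 Hz and 120 Hz against each HDMI version's maximum link rate.
for hz in (60, 120):
    rate = active_video_gbps(3840, 2160, hz)
    print(
        f"4K @ {hz} Hz: at least {rate:.1f} Gbit/s; "
        f"exceeds HDMI 2.0: {rate > HDMI_2_0_MAX_GBPS}, "
        f"exceeds HDMI 2.1: {rate > HDMI_2_1_MAX_GBPS}"
    )
```

At 60 Hz the estimate is about 11.9 Gbit/s, which stays under HDMI 2.0's limit. At 120 Hz it doubles to about 23.9 Gbit/s. That already exceeds HDMI 2.0 before any overhead is counted, but it fits within HDMI 2.1.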

50 Hz vs. 60 Hz

If a video has a frame rate of 60 frames per second, how will it look on a TV labeled 50 Hz? In practice, it displays normally. Modern TVs are designed to handle video signals up to 60 Hz, regardless of whether they are marketed as 50 Hz or 60 Hz models.

The split between 50 Hz and 60 Hz goes back to power grid standards from the era of cathode ray tube (CRT) televisions. In Europe, mains AC was standardized at 50 Hz, while in the US it was standardized at 60 Hz. Early analog CRT TVs synchronized image generation with the power grid frequency, because it was stable and held within a narrow tolerance. In effect, the screen's refresh rate was taken from the mains.

As the technology evolved, TVs stopped using the mains frequency to synchronize the image. However, consumers have become so used to 50 Hz for Europe and 60 Hz for the US that manufacturers still use these designations to indicate the refresh rate.

Today, a modern TV can display video at any frame rate up to 60 Hz without problems. The display panel itself, however, has its own native refresh rate. When a manufacturer specifies 50 Hz or 60 Hz, it is essentially guaranteeing stable image quality at that refresh rate.

100 Hz and 120 Hz TVs

Support for 100 Hz or 120 Hz is mainly a technological improvement that arrived with new standards. HDMI 2.1, announced in 2017, can transmit 4K video at up to 120 Hz. This makes the TV far more useful as a monitor, especially for games and other high-frame-rate content.

In practice, there is no real difference between a TV labeled 100 Hz and a TV labeled 120 Hz. For the European market, manufacturers usually specify 100 Hz support, and for the US market, 120 Hz. Technically, these TVs are the same.

Therefore, in countries where the power grid runs at 50 Hz, manufacturers use the 50 Hz and 100 Hz labels. In countries with a 60 Hz grid, they use 60 Hz and 120 Hz.
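The labeling rule described above can be written as a small lookup. The region's mains frequency selects which pair of numbers goes on the box, while the panel class underneath stays the same. This is a hypothetical sketch. The names `REGION_LABELS` and `panel_class`, and the class names "standard" and "high", are illustrative and not an industry scheme.

```python
# Hypothetical mapping: mains frequency of the sales region -> refresh-rate labels on the box.
REGION_LABELS = {
    50: {"standard": 50, "high": 100},  # e.g. Europe
    60: {"standard": 60, "high": 120},  # e.g. the US
}


def panel_class(label_hz: int) -> str:
    """Return the (hypothetical) panel class a marketing label refers to, regardless of region."""
    for labels in REGION_LABELS.values():
        for panel, hz in labels.items():
            if hz == label_hz:
                return panel
    raise ValueError(f"unrecognized refresh-rate label: {label_hz} Hz")


print(panel_class(50), panel_class(60))    # standard standard
print(panel_class(100), panel_class(120))  # high high
```

Labels from different regions collapse to the same class. This mirrors the point that a "100 Hz" and a "120 Hz" TV are technically the same product.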