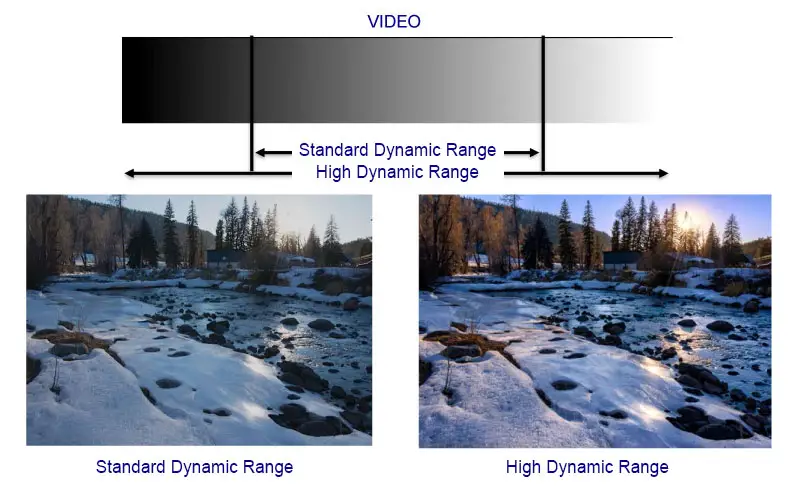

In 2016, HDR (High Dynamic Range) support became the most heavily advertised feature in the television industry. HDR allows video to be displayed with a wider range of brightness and richer, more detailed color. The technology did not appear overnight. Work on improving display quality began as early as 2010, and it soon became clear that screens were improving faster than the video formats made for them: standard video would not be able to show everything the new displays could do. To solve this problem, the industry decided to enrich video content with additional HDR data.

By 2016, quantum-dot (QLED) and OLED TVs had entered the market. Thanks to 10-bit color depth, these TVs could display more than a billion shades, and their built-in software was designed to process HDR content properly.
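The "billion shades" figure follows from simple arithmetic. Each of the three color channels (red, green, blue) gets 2^bits levels, and the number of possible colors is that value cubed. Here is a minimal Python sketch of the calculation:

```python
def levels_per_channel(bits: int) -> int:
    """Number of distinct values a single color channel can take."""
    return 2 ** bits


def total_colors(bits: int) -> int:
    """Number of R, G, B combinations at a given per-channel bit depth."""
    return levels_per_channel(bits) ** 3


for bits in (8, 10, 12):
    print(f"{bits}-bit: {levels_per_channel(bits):,} levels per channel, "
          f"{total_colors(bits):,} colors")
# 8-bit: 256 levels per channel, 16,777,216 colors
# 10-bit: 1,024 levels per channel, 1,073,741,824 colors
# 12-bit: 4,096 levels per channel, 68,719,476,736 colors
```

At 10 bits the total is about 1.07 billion, which is where the "billion shades" claim comes from.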

What you need to know about HDR

HDR works through metadata embedded in the video stream alongside the encoded picture. This metadata is added during production, typically at the color-grading and mastering stage, or generated in real time, for example when a game console renders a video stream. You may wonder why metadata is needed at all, instead of simply putting all the information directly into the video itself.

The reason is simple. HDR is designed for displays that support 10-bit color depth. An 8-bit display can show 256 brightness levels per color channel, while a 10-bit display can show 1,024, four times as many. A video created exclusively for 10-bit screens would not display correctly on 8-bit TVs.
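The sketch below shows what the extra levels mean in practice. It quantizes a smooth brightness ramp (values from 0.0 to 1.0) at both bit depths and counts how many distinct steps survive. Fewer steps across the same range means bigger jumps between neighboring shades, and viewers see those jumps as banding. The ramp length is an arbitrary choice. Dropping the two lowest bits is a deliberate simplification of how 10-bit content might be reduced for an 8-bit panel, because real devices may use dithering instead.

```python
def quantize(value: float, bits: int) -> int:
    """Map a normalized signal value in [0.0, 1.0] to an integer code."""
    max_code = 2 ** bits - 1
    return round(value * max_code)


# A smooth ramp of 10,000 samples from black (0.0) to peak white (1.0).
ramp = [i / 9999 for i in range(10_000)]

for bits in (8, 10):
    codes = {quantize(v, bits) for v in ramp}
    step = 1 / (2 ** bits - 1)  # gap between neighboring codes
    print(f"{bits}-bit: {len(codes)} distinct steps, step size {step:.5f}")

# Simplified 10-bit -> 8-bit reduction: drop the two lowest bits, so every
# group of four neighboring 10-bit codes collapses into one 8-bit code.
eight_bit_codes = {code >> 2 for code in range(1024)}
print(len(eight_bit_codes))
```

The 8-bit ramp ends up with 256 steps and the 10-bit ramp with 1,024. Each 8-bit step is therefore four times as large.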

Another important factor is video compression. Codecs reduce file size by dividing each frame into blocks of pixels and encoding each block approximately, rather than storing every pixel individually, so subtle differences in brightness and color can be lost.

Image quality also depends heavily on lighting during filming. For example, if you film a table lamp shining in a dark room with a short exposure, the lamp will be clearly visible, but most of the room may remain in darkness. If you increase the exposure or sensitivity, more details in the room become visible, but the lamp may turn into a bright, washed-out spot that overwhelms nearby objects. A single exposure cannot capture both extremes at once.
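The lamp example can be simulated with a toy sensor model. Scene brightness is multiplied by an exposure factor, anything above full scale is clipped, and the result is quantized to 8 bits. The scene values below are invented, arbitrary linear units, not measurements, and the model ignores noise and every other real sensor effect.

```python
# Hypothetical scene luminances in arbitrary linear units (not measured data).
SCENE = {
    "dark corner": 0.2,
    "table": 1.0,
    "wall": 3.0,
    "lampshade": 200.0,
    "bulb": 1000.0,
}


def capture(scene: dict[str, float], exposure: float, bits: int = 8) -> dict[str, int]:
    """Toy sensor: scale by exposure, clip at full scale, quantize."""
    max_code = 2 ** bits - 1
    result = {}
    for name, luminance in scene.items():
        signal = min(luminance * exposure, 1.0)  # brighter light is clipped
        result[name] = round(signal * max_code)
    return result


short_exp = capture(SCENE, exposure=1 / 1000)  # keeps lamp detail
long_exp = capture(SCENE, exposure=1 / 4)      # reveals the room
print("short:", short_exp)
print("long: ", long_exp)
```

With the short exposure, the corner and the table both come out as code 0, but the lampshade and bulb stay distinct. With the long exposure, the room separates into visible levels, while the lampshade and bulb both clip to 255 and merge into one bright spot.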

Because of these limitations, video is produced in formats that remain compatible with different types of TVs. In many cases, HDR content can be watched even on older models released before HDR became widespread. However, modern TVs with higher brightness and a wider color gamut can display the same content with much greater detail and contrast.

Simply put, HDR video contains additional data that the TV processes. Using tone-mapping algorithms, the TV fits the brightness and contrast of specific areas of the screen to what its own panel can reproduce. As a result, the image looks more natural, detailed, and visually impressive.
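Each TV manufacturer uses its own tone-mapping algorithms. The sketch below is only a rough illustration, built on a simple "knee" curve. Brightness below the knee passes through unchanged. The range from the knee up to the content's peak, which the TV would read from the metadata, is compressed linearly into whatever headroom the panel has left. The function name, the 75% knee and the nit values are assumptions for illustration and are not part of any HDR standard.

```python
def tone_map(nits: float, content_peak: float, display_peak: float,
             knee_ratio: float = 0.75) -> float:
    """Hypothetical knee-style tone mapping (illustration only).

    Values up to the knee are shown as-is; values between the knee and the
    content peak are squeezed linearly into the display's remaining headroom.
    """
    if content_peak <= display_peak:
        return min(nits, display_peak)  # the panel can show everything
    knee = knee_ratio * display_peak
    if nits <= knee:
        return nits
    # Fraction of the way from the knee to the content peak (capped at 1.0).
    t = min((nits - knee) / (content_peak - knee), 1.0)
    return knee + t * (display_peak - knee)


# Content mastered to 4000 nits, shown on a hypothetical 600-nit TV.
for value in (100, 450, 1000, 4000):
    print(value, "->", round(tone_map(value, 4000, 600), 1))
```

Dark and mid tones are left alone. A 1000-nit highlight lands at roughly 473 nits, and the 4000-nit peak maps to the panel's 600-nit maximum. A real implementation would more likely use a smooth curve above the knee, because a straight line creates a visible change in slope at the knee.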

Overview of the features of different HDR formats

| HDR Format | Color Depth | Peak Brightness (nits) | Metadata | Year Developed | Features |
|---|---|---|---|---|---|
| Dolby Vision | 12-bit | Up to 4000+ | Dynamic | 2014 | Supports 12-bit color depth, requires compatible hardware |
| HDR10 | 10-bit | Up to 1000 | Static | 2015 | Basic standard, one setting applied for the entire video |
| HLG (Hybrid Log-Gamma) | 10-bit | Depends on display | No metadata | 2016 | Designed for broadcasting, compatible with both SDR and HDR displays |
| Advanced HDR by Technicolor | 10-bit | Up to 1000 | Dynamic | 2016 | Offers flexibility in content production and broadcasting |
| HDR10+ | 10-bit | Up to 4000 | Dynamic | 2017 | Enhanced version of HDR10, adjusts brightness and contrast for each scene |

There are several HDR standards on the market, and as with most new technologies, competition between formats is inevitable. At the same time, it is TV manufacturers who decide which HDR formats their models will support.

Dolby Vision is considered the most advanced HDR format. It was designed with future displays in mind and supports color depths of up to 12 bits, even though 12-bit TV panels are not yet available. It also uses dynamic metadata, which allows brightness and contrast to be adjusted for each frame and gives very precise control over the image.

HDR10 is a simpler and more widely used format. It relies on static metadata: a single set of values describes the entire video and remains unchanged throughout playback. It does not adjust settings for each scene or frame, but it still provides noticeably better brightness and color than standard video.
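HDR10's static metadata includes two values computed over the whole video. MaxCLL (Maximum Content Light Level) is the brightest single pixel anywhere in the content. MaxFALL (Maximum Frame-Average Light Level) is the highest average brightness of any single frame. The sketch below computes both from a few toy frames. It assumes pixel values are already linear light in nits and takes a pixel's light level as its brightest R, G or B component. The frame data is invented.

```python
from statistics import mean

# Toy content: each frame is a list of (R, G, B) pixel values in nits.
# These numbers are invented for illustration.
frames = [
    [(5, 4, 3), (10, 12, 8), (2, 2, 2)],               # dark interior
    [(300, 280, 250), (900, 850, 800), (50, 60, 40)],  # bright sky
    [(20, 25, 30), (1000, 950, 600), (15, 15, 15)],    # night scene, lamp
]


def pixel_light_level(rgb: tuple[int, int, int]) -> int:
    """Light level of one pixel: its brightest color component."""
    return max(rgb)


def static_metadata(frames: list[list[tuple[int, int, int]]]) -> tuple[float, float]:
    """Return (MaxCLL, MaxFALL) computed over the whole video."""
    max_cll = max(pixel_light_level(p) for frame in frames for p in frame)
    max_fall = max(mean(pixel_light_level(p) for p in frame) for frame in frames)
    return max_cll, max_fall


max_cll, max_fall = static_metadata(frames)
print(f"MaxCLL = {max_cll} nits, MaxFALL = {max_fall:.1f} nits")
```

A single lamp in one night scene sets MaxCLL to 1000 nits for the entire video. This is why static metadata cannot tell the TV that most scenes are far dimmer than that.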

HLG (Hybrid Log-Gamma) takes a different approach: it does not use metadata at all. Instead, brightness information is encoded directly into the video signal with a hybrid transfer curve, a conventional gamma curve for darker tones combined with a logarithmic curve for highlights, hence the name. On playback, the TV reproduces as much of the brightness range as its panel allows and ignores the levels it cannot display. This makes HLG particularly suitable for television broadcasting.
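The hybrid curve is defined in ITU-R BT.2100. Darker tones use a square-root (gamma-like) segment, and brighter tones switch to a logarithmic segment that packs highlights into the top of the signal range. The Python sketch below implements this camera-side transfer function (the OETF) with the constants from the specification. E is normalized linear scene light in [0, 1], and E' is the resulting signal value.

```python
import math

# HLG OETF constants from ITU-R BT.2100.
A = 0.17883277
B = 1 - 4 * A                  # 0.28466892
C = 0.5 - A * math.log(4 * A)  # 0.55991073


def hlg_oetf(e: float) -> float:
    """Convert normalized linear scene light E in [0, 1] to an HLG signal E'."""
    if e < 0 or e > 1:
        raise ValueError("E must be in [0, 1]")
    if e <= 1 / 12:
        return math.sqrt(3 * e)          # gamma-like segment for darker tones
    return A * math.log(12 * e - B) + C  # logarithmic segment for highlights


for e in (0.0, 1 / 12, 0.25, 1.0):
    print(f"E = {e:.4f} -> E' = {hlg_oetf(e):.4f}")
```

The darkest twelfth of scene light uses the lower half of the signal (E' up to 0.5), and everything brighter is compressed logarithmically into the upper half. Because the lower segment behaves much like a conventional gamma curve, an SDR display can show an HLG signal reasonably well. This is the compatibility property described above.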

Advanced HDR by Technicolor is another broadcast-focused format. However, it has not gained widespread popularity and is generally considered less advanced than Dolby Vision and HDR10+.

HDR10+ is similar in concept to Dolby Vision. It uses dynamic metadata to adjust brightness for each scene, but is designed for 10-bit displays, which are now standard in modern HDR TVs.
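The practical difference between static and dynamic metadata shows up most clearly in dark scenes. The sketch below models a hypothetical 600-nit TV that compresses highlights linearly above a fixed "knee" (300 nits, an arbitrary choice), mapping the content peak down to what the panel can show. With static metadata, the TV has to assume the whole film's 4000-nit peak in every scene. With per-scene metadata, it knows that a night scene never exceeds 500 nits, so it does not need to compress that scene at all. The scene values and the curve are invented for illustration. Neither HDR10+ nor Dolby Vision specifies this exact behavior.

```python
DISPLAY_PEAK = 600  # hypothetical TV panel, in nits
KNEE = 300          # hypothetical start of highlight compression, in nits


def compress(nits: float, peak: float) -> float:
    """Squeeze [KNEE, peak] linearly into [KNEE, DISPLAY_PEAK] (illustration only)."""
    if peak <= DISPLAY_PEAK:
        return min(nits, DISPLAY_PEAK)  # the scene already fits on the panel
    if nits <= KNEE:
        return nits                     # shadows and midtones are untouched
    t = (min(nits, peak) - KNEE) / (peak - KNEE)
    return KNEE + t * (DISPLAY_PEAK - KNEE)


FILM_PEAK = 4000  # static metadata: one peak value for the whole film

# Hypothetical scenes: (name, brightest highlight in the scene, in nits).
scenes = [("night street, lamp", 500), ("sunlit beach", 4000)]

for name, highlight in scenes:
    static = compress(highlight, FILM_PEAK)
    dynamic = compress(highlight, highlight)  # per-scene peak from metadata
    print(f"{name}: static {static:.0f} nits, dynamic {dynamic:.0f} nits")
```

The sunlit beach looks the same either way. The street lamp, however, is dimmed to about 316 nits under static metadata but keeps its intended 500 nits when the TV knows the scene's own peak.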

Today, the two leading HDR formats with dynamic metadata are HDR10+ and Dolby Vision. These two standards compete most actively with each other, each backed by different TV manufacturers and content providers.